Before AI builds your models

Should you roll the dice on rules?

Standards can be leveraged to produce cutting-edge AI performance in financial modelling

The experiment

2025 saw an onslaught of AI-for-spreadsheet tools hit the market. Every one that we reviewed highlighted AI-driven sheet formatting as a feature.

The 2021 Financial Modelling Survey highlighted that 95% of professional modellers believe standards are important (Full Stack Modeller, 2021). Modelling standards define rules for structure, formatting, formulas, and techniques applied in the workbook. They are important frameworks for reducing errors, ensuring consistency, training analysts, and improving communication. Every elite modelling team in the game follows standards, either contributing to open-source frameworks or deploying their own in-house strategies.

Modelling standards are deterministic, rule-based systems.

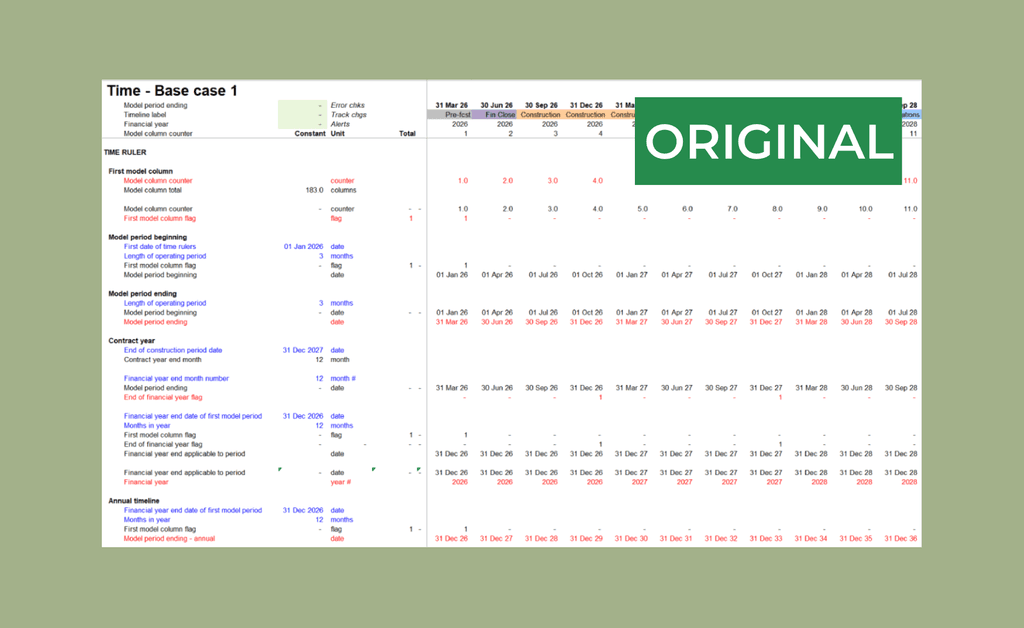

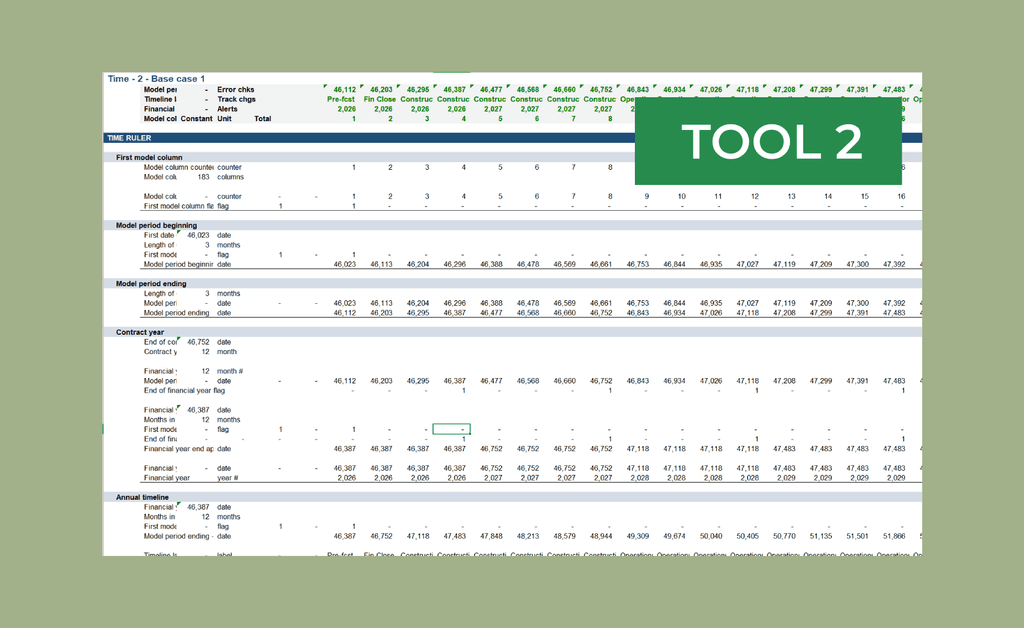

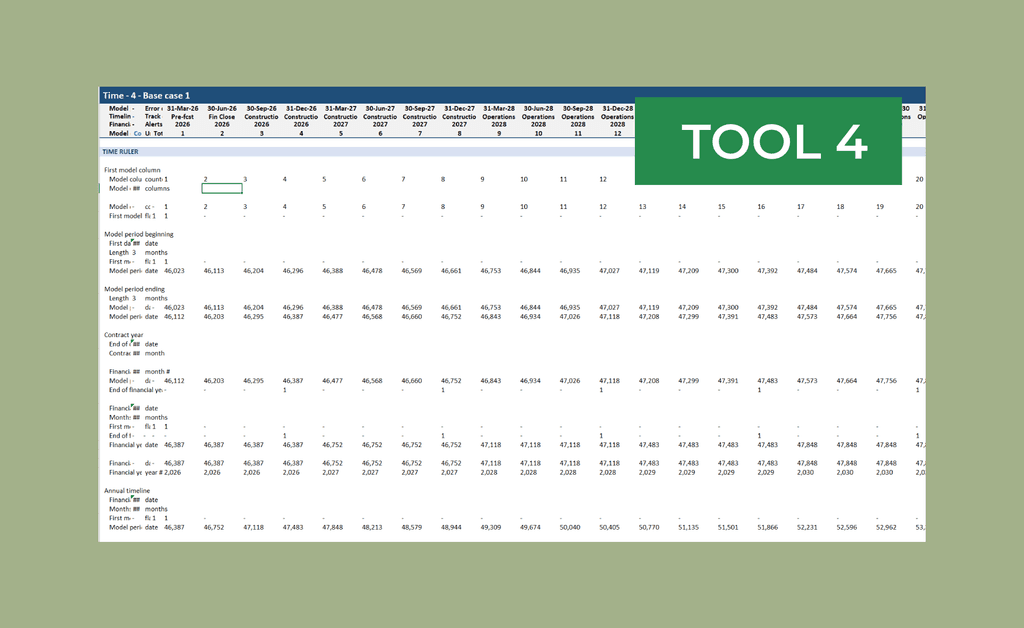

We asked four leading Excel AI agents to apply FAST-standard formatting to an unformatted sheet; here are the results:

This is not a performance issue

The important takeaway here isn't that every tool failed to apply FAST-standard formatting, or that the results were completely different.

This is not a performance issue; it's a misclassification.

Large language models are inherently probability-based systems. They excel in situations where judgement is required, achieving tasks that traditional software could never dream of. By definition, applying professional standards requires zero judgement. That's kind of the point.

If any software enthusiasts are reading this, this exercise is broadly akin to asking an AI agent to apply syntax highlighting to your code.

If you're not a software engineer, syntax highlighting is the colour-coding applied to your code by your development environment. A set of rules defines the colours for different tokens (words) in your code: keywords are one colour, strings another, comments another, and so on. The mapping is entirely deterministic, which is why colours can be switched to match developer preference without affecting correctness.
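To make the analogy concrete, here is a minimal sketch of rule-based highlighting using Python's standard `tokenize` and `keyword` modules. The `THEME` colours and the `highlight` function are hypothetical names invented for this illustration, and real editors use richer grammars, but the principle is the same: the same input always produces the same colours.

```python
import io
import keyword
import tokenize

# Hypothetical theme: placeholder colour names a developer could swap freely.
THEME = {"keyword": "purple", "string": "green", "comment": "grey", "other": "black"}


def highlight(source: str) -> list[tuple[str, str]]:
    """Return (token_text, colour) pairs using fixed rules only."""
    coloured = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type == tokenize.COMMENT:
            kind = "comment"
        elif tok.type == tokenize.STRING:
            kind = "string"
        elif tok.type == tokenize.NAME and keyword.iskeyword(tok.string):
            kind = "keyword"
        else:
            kind = "other"
        # Skip whitespace-only tokens (newlines, indents, end marker).
        if tok.string.strip():
            coloured.append((tok.string, THEME[kind]))
    return coloured


code = 'if ready:  # check\n    print("go")\n'
first_run = highlight(code)
second_run = highlight(code)
print(first_run == second_run)  # identical on every run, by construction
```

Changing the theme changes the colours, never the classification. There is no probability anywhere in the pipeline.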

No developer would accept that a keyword might be highlighted correctly. Nor would they entertain a conversation about the increased likelihood of correct highlighting due to performance improvements. The problem was solved decades ago and never required intelligence.

Most professional modellers already understand this intuitively. Firm-wide macros typically automate in-house formatting at the push of a button. For these teams, replacing guaranteed consistency with an AI formatting tool is a downgrade from their current position.
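The logic behind such a macro can be sketched in a few lines. The example below is an assumption-laden illustration rather than any firm's actual macro: the `HOUSE_STYLE` palette, the `classify` rules and the cell dictionary are all hypothetical, and they are not taken from any published standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CellStyle:
    font_colour: str
    number_format: str


# Hypothetical house style, for illustration only.
HOUSE_STYLE = {
    "input": CellStyle("blue", "#,##0"),
    "calculation": CellStyle("black", "#,##0"),
    "label": CellStyle("black", "General"),
}


def classify(cell_value: object) -> str:
    """Classify a cell with fixed rules: formula, text label, or hard-coded input."""
    if isinstance(cell_value, str):
        return "calculation" if cell_value.startswith("=") else "label"
    return "input"


def format_sheet(cells: dict[str, object]) -> dict[str, CellStyle]:
    """Map every cell reference to the style its classification dictates."""
    return {ref: HOUSE_STYLE[classify(value)] for ref, value in cells.items()}


sheet = {"A1": "Base rent", "B1": 300000, "C1": "=B1/4"}
styles = format_sheet(sheet)
for ref, style in styles.items():
    print(ref, style.font_colour, style.number_format)
```

Running this a thousand times on the same sheet yields the same thousand results, which is the guarantee a formatting macro already gives these teams.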

What are standards protecting?

We used formatting as our example, but formatting is just one small slice of what modelling standards cover. They also govern workbook structure, worksheet layout, line-item design, formula rules, naming conventions, sign conventions, and more.

Standards exist so models can be audited, built consistently, change hands quickly and deliver maximum end-user value. If we lose our standards, we threaten the integrity of our highest-stakes, decision-driving artifacts.

Auditability disappears when the same standard is applied inconsistently. If calculation layouts are unpredictable, conventions are mixed within the same model, or formatting is random, the reviewer has to re-learn every model, losing the advantage of their experience.

Build slows down when you can't trust that templates and components will behave predictably. If fundamental model building blocks are different every time, then we must start our understanding from scratch every single time.

Collaboration fragments when two analysts on the same team produce models that look structurally different. Where we previously had a shared, understood language, we now create our own silos with high barriers to understanding.

The artifact loses value because it no longer carries the decades of institutional knowledge embedded in the standard. It's just a spreadsheet that happens to be formatted to look nice.

These are real issues that point to the usability of the model. An even more challenging problem exists in validation. "The AI made a decision to do it like this" is not an argument that will be acceptable in an investment committee underwriting a $1bn project, nor should it be.

AI-formatted models can look professional, but visual appeal is largely irrelevant in conversations surrounding risk allocation. Two models produced by the same team with the same AI tool, yet built on subtly (or not so subtly) different core infrastructure, will undermine the entire process.

Deterministic vs judgement: know the difference

Colin Jarvis, lead FDE at OpenAI, described his team's approach to rules: the goal is always to maximise non-LLM-driven determinism, putting in "guard rails" that increase trust in AI outputs (Altimeter Capital, 2025). He notes this is a consistent feature of how OpenAI's FDE team approach any new problem, particularly when the stakes are high.

This approach has benefits beyond increased reliability: by decreasing the scope of the judgement layer, we focus the agent, increasing performance on the more important tasks.

This is not just a question of tooling; high-performance financial modellers will need a good understanding of the separation between deterministic and judgement-based parts of their workflow. Here is an example inspired by the open-source FAST standard (The FAST Standard Organisation, 2019).

| Deterministic (rules) | Judgement (intelligence) |
|---|---|
| Workbook flow | Business logic |
| Header design | Input assumptions |
| Colour coding | Interpreting commercials |
| Calculation layout | Naming unique calculations |
| Standardised calculations | |
| Formula usage | |
| Sign conventions | |
| Scenario management | |
| Number formatting | |
| Line-item design | |

The more items we can shift into the left column, the higher the performance of our agents. Items are shifted to the left through:

Creating rules through centralised, documented standards

Using tools that can enforce those rules
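As a rough sketch of what "a tool that enforces a rule" can mean, the snippet below checks formulas against two documented limits. The limits, the `check_formula` name and the sample formulas are hypothetical choices for illustration; a real standard defines its own rules and thresholds.

```python
import re

# Hypothetical limits chosen for illustration; a real standard sets its own.
MAX_FORMULA_LENGTH = 40
MAX_FUNCTION_CALLS = 1


def check_formula(formula: str) -> list[str]:
    """Return rule violations for one formula; an empty list means compliant."""
    violations = []
    if len(formula) > MAX_FORMULA_LENGTH:
        violations.append("formula too long")
    # Count spreadsheet function calls such as MAX( or IF(.
    calls = re.findall(r"[A-Z]+\(", formula)
    if len(calls) > MAX_FUNCTION_CALLS:
        violations.append("too many function calls in one cell")
    return violations


simple = "=J$10*J$11"
complex_formula = "=J$14*(MAX($F$9*J$13,$F$10)+IF(J$4>=2040,$F$12,1))"
print(check_formula(simple))
print(check_formula(complex_formula))
```

Because the check is a pure function of the formula text, it gives the same verdict regardless of who, or what, wrote the formula.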

Using AI to solve everything dilutes the value of our established practices. Instead, we can leverage our existing IP to maximise AI performance.

What structural enforcement looks like

Rather than auditing compliance after the fact, we can enforce compliance by design.

Python doesn't check for indentation after the fact. You can't run non-indented Python - it's a language-level requirement. We can apply the same principle to financial modelling: the standard becomes the medium rather than a layer applied as an afterthought.
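A small sketch makes the point: the built-in `compile` function rejects an unindented function body before anything runs. The `quarterly_rent` snippets and the `compiles` helper are made up for this illustration.

```python
# Two versions of the same hypothetical function, differing only in indentation.
unindented = "def quarterly_rent(annual):\nreturn annual / 4\n"
indented = "def quarterly_rent(annual):\n    return annual / 4\n"


def compiles(source: str) -> bool:
    """Report whether Python accepts the source at all."""
    try:
        compile(source, "<example>", "exec")
        return True
    except IndentationError:
        return False


print(compiles(unindented))
print(compiles(indented))
```

There is no "non-compliant but still running" state. The rule is part of the medium, so a violation cannot reach the reader in the first place.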

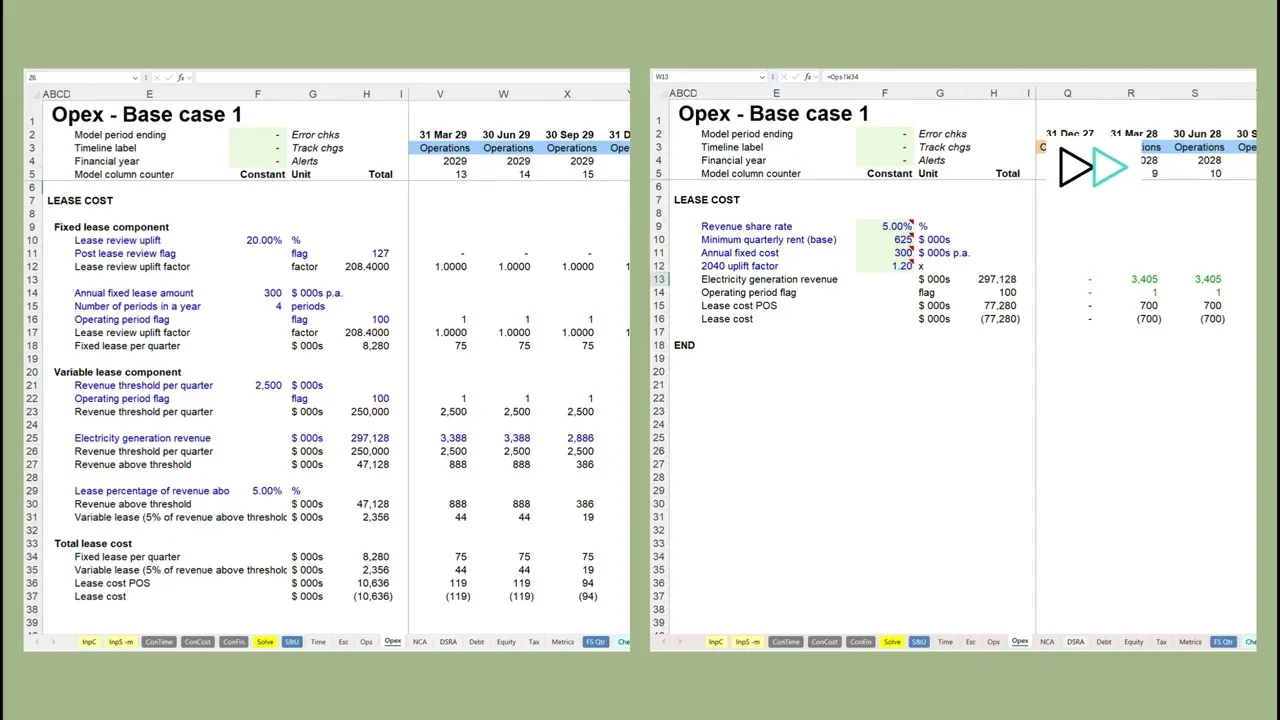

In the video below, we request a build of this lease structure:

$300k per annum base payment, with an assumed 20% uplift after a review in 2040

5% of revenue above $2.5m in each quarter

On the left, we enforce FAST build standards using Cellori, asking the agent to just provide the required business logic. On the right, we allow the agent access to everything (formulas, formatting, structure etc.) via a leading AI-in-Excel agent.

Some clear observations:

Enforcing standards results in a clearer, more auditable calculation, consistent with the rest of the model.

Applying model structuring with software is considerably faster than with AI. The original right-hand video was over 2 minutes before we sped it up.

The agent on the right made an error, but since all of the logic is built into one complex formula (not allowed in the FAST standard), it is very hard to see. Perhaps you can spot it in the formula below.

=J$14*(MAX($F$9*J$13,$F$10*IF(J$4>=2040,$F$12,1))+($F$11/4)*IF(J$4>=2040,$F$12,1))

We'll wait…

The point isn't whether you found it or not... you shouldn't have to look. When standards are enforced structurally, this category of error doesn't exist. The calculation is broken into visible, labelled steps. Each component can be checked visually and independently. The standards prevent the complexities that bury mistakes.
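For illustration, here is one way the lease terms described above could be laid out as labelled steps, written in Python rather than in a workbook. This is a sketch under stated assumptions, not the model from the video: it assumes the uplift applies only to the base payment, that it applies from 2040 onwards, and that the $2.5m threshold is tested against each quarter's revenue. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeaseInputs:
    base_rent_per_annum: float = 300_000.0
    review_year: int = 2040                # assumption: uplift applies from this year
    review_uplift: float = 0.20            # assumption: applies to base rent only
    turnover_rate: float = 0.05
    revenue_threshold_per_quarter: float = 2_500_000.0


def quarterly_lease_steps(
    year: int, revenue: float, inputs: LeaseInputs = LeaseInputs()
) -> dict[str, float]:
    """Build the quarterly payment as small steps, each returned under its own label."""
    review_flag = 1.0 if year >= inputs.review_year else 0.0
    uplift_factor = 1.0 + inputs.review_uplift * review_flag
    base_rent = inputs.base_rent_per_annum / 4 * uplift_factor
    revenue_above_threshold = max(revenue - inputs.revenue_threshold_per_quarter, 0.0)
    turnover_rent = revenue_above_threshold * inputs.turnover_rate
    return {
        "Review flag": review_flag,
        "Uplift factor": uplift_factor,
        "Base rent": base_rent,
        "Revenue above threshold": revenue_above_threshold,
        "Turnover rent": turnover_rent,
        "Total lease payment": base_rent + turnover_rent,
    }


steps = quarterly_lease_steps(year=2041, revenue=3_000_000.0)
for label, value in steps.items():
    print(f"{label}: {value:,.2f}")
```

Each labelled line can be checked on its own against the term sheet, so a wrong threshold or a misapplied uplift shows up in one visible row instead of hiding inside a nested expression.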

The right questions to ask

Before you implement AI in your financial modelling workflows, don't ask how well it can apply professional standards. Ask whether that is something that should be left to chance at all.

Consider:

How can we guarantee consistency across our future models?

Can we enforce our standards by design?

How simple can we make this problem for our agents?

If your team already values standards, they represent an advantage in your AI deployment. Use it.
